Understanding the Core Elements of Technical SEO and Why They Form the Backbone of Modern Search Engine Optimization

Technical SEO is the invisible engine that powers your website’s visibility. While content attracts visitors and backlinks build authority, the core elements of technical SEO ensure that search engines can properly crawl, render, interpret, and index your content without friction.

Think of your website as a high-performance sports car. Content is the fuel. Backlinks are the horsepower. But technical SEO? That’s the engine design, the aerodynamics, and the transmission system working together to deliver peak performance.

What Technical SEO Really Means: Beyond Keywords and Backlinks

SEO is no longer just about placing keywords on pages. Search engines also evaluate:

- Page rendering quality

- Site speed and user signals

- Structured data clarity

- Mobile responsiveness

- Secure browsing via HTTPS protocol

Technical SEO ensures your site meets these advanced requirements.

How Search Engine Crawlers, Indexing Systems, and Page Rendering Work Together

Search engines follow a three-step process:

- Crawling – Discovering pages through links and XML sitemaps.

- Rendering – Processing JavaScript, CSS, and layout elements.

- Indexing – Storing and organizing the rendered content.

If any of these steps fail due to poor site architecture or blocked resources in robots.txt, your rankings suffer.

The Relationship Between Technical Infrastructure and Organic Visibility

Strong technical SEO foundations improve:

- Crawl efficiency

- Indexation status

- SERP display quality

- User experience signals

When search engines can crawl, render, and index your site reliably, rankings tend to follow.

Why Technical SEO Has Become More Critical Than Content Alone in Today’s Algorithm-Driven Search Landscape

Search engines now prioritize experience and performance. When content quality is similar, a fast, secure, mobile-friendly website tends to outrank a slower competitor.

Google’s Mobile-First Indexing and AI-Driven Ranking Systems

With mobile-first indexing, Google primarily uses your mobile version for evaluation. If your site lacks mobile responsiveness, you risk losing visibility.

AI-powered algorithms now analyze:

- Core Web Vitals

- Page rendering stability

- Duplicate content signals

- Canonical tags consistency

Technical precision is no longer optional; it’s mandatory.

How Core Web Vitals and Page Experience Signals Influence Rankings

Largest Contentful Paint (LCP) measures how quickly the main content loads, and Cumulative Layout Shift (CLS) measures how much the layout moves while loading. Both directly affect user engagement. Poor site speed increases bounce rate, which can reduce ranking potential.
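
As a rough illustration, the snippet below rates LCP and CLS values against the thresholds Google publishes (LCP: 2.5 s and 4.0 s; CLS: 0.1 and 0.25). The function name and the sample values are made up for this sketch; real values would come from field data such as a PageSpeed Insights report.

```python
# Boundaries for "good" and "poor" as published by Google for Core Web Vitals.
THRESHOLDS = {
    "LCP": (2.5, 4.0),   # seconds
    "CLS": (0.1, 0.25),  # unitless layout-shift score
}


def rate_metric(name: str, value: float) -> str:
    """Return 'good', 'needs improvement', or 'poor' for one metric value."""
    good, poor = THRESHOLDS[name]
    if value <= good:
        return "good"
    if value <= poor:
        return "needs improvement"
    return "poor"


# Hypothetical measurements for a single page.
sample = {"LCP": 3.1, "CLS": 0.05}
for metric, value in sample.items():
    print(metric, value, rate_metric(metric, value))
```

A page only passes when every metric is rated "good", so the weakest metric is usually the one to fix first.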

The Business Impact of Poor Technical SEO on Traffic and Revenue

Slow sites lose customers. Broken links damage trust. Redirect chains waste crawl budget. Over time, revenue declines. Strong technical SEO equals measurable business growth.

Site Architecture Optimization: Designing a Logical, Crawl-Friendly, and Scalable Website Structure

Site architecture determines how efficiently search engines explore your content.

Building a Hierarchical Site Architecture That Enhances Crawl Efficiency

Organize content into logical categories and subcategories. Keep essential pages within three clicks of the homepage.
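
One way to check the three-click guideline is a breadth-first search over your internal link graph. The sketch below assumes you already have that graph as a dictionary (for example, exported from a crawler); the URLs are invented for illustration.

```python
from collections import deque


def click_depths(links: dict[str, list[str]], home: str) -> dict[str, int]:
    """Breadth-first search from the homepage; depth = minimum number of clicks."""
    depths = {home: 0}
    queue = deque([home])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depths:  # first visit is the shortest path
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths


# Hypothetical internal-link graph: page -> pages it links to.
site = {
    "/": ["/shoes/", "/blog/"],
    "/shoes/": ["/shoes/running/"],
    "/shoes/running/": ["/shoes/running/trail/"],
    "/shoes/running/trail/": ["/shoes/running/trail/model-x/"],
}

depths = click_depths(site, "/")
too_deep = sorted(url for url, depth in depths.items() if depth > 3)
print(too_deep)  # ['/shoes/running/trail/model-x/']
```

Pages that show up in `too_deep` are candidates for a link from a category page or the homepage.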

Flat vs Deep Architecture: Which Structure Maximizes Indexation?

Flat architecture improves crawl efficiency. Deep structures often bury important pages.

Optimizing Category Pages and Content Silos for Authority Distribution

Content silos strengthen topical relevance and improve internal linking structure.

Internal Linking Strategies That Strengthen Topical Relevance and Crawl Depth

Strategic internal linking distributes authority and reduces orphan pages.

Anchor Text Optimization for Contextual Relevance

Use descriptive anchor text aligned with target keywords.

Eliminating Orphan Pages to Improve Indexation Status

Every valuable page should have at least one internal link.
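
A simple way to surface orphan pages is to compare the URLs you know about (from your sitemap or CMS) with the URLs that actually receive internal links. The data below is hypothetical, and the helper name is only for this sketch.

```python
def find_orphans(known_urls: set[str], links: dict[str, set[str]]) -> set[str]:
    """Return known URLs that no other page links to."""
    linked = set()
    for source, targets in links.items():
        # A page linking to itself does not make it discoverable.
        linked |= {target for target in targets if target != source}
    return known_urls - linked


# Hypothetical inputs: URLs from the sitemap, and internal links found by a crawl.
known = {"/", "/pricing/", "/blog/", "/blog/old-post/"}
internal_links = {
    "/": {"/pricing/", "/blog/"},
    "/blog/": {"/"},
    "/blog/old-post/": {"/blog/old-post/"},
}

orphans = find_orphans(known, internal_links)
print(orphans)  # {'/blog/old-post/'}
```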

Managing Crawl Budget to Ensure High-Value Pages Are Prioritized

Crawl budget is limited. Waste it, and search engines may ignore critical pages.

Identifying Low-Value URLs That Waste Crawl Budget

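Remove or consolidate thin content, faceted filter pages, and duplicate URLs.

Using Robots.txt and Meta Robots to Control Crawl Flow

Use robots.txt carefully to block irrelevant sections from being crawled while allowing important content. For pages that may be crawled but should stay out of search results, use a meta robots noindex tag instead.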

Site Speed Optimization: Improving Core Web Vitals, Page Rendering, and User Experience Signals

Site speed directly impacts rankings and conversions.

Measuring Site Speed Using Google PageSpeed Insights, Lighthouse, and Real User Metrics

Tools like Google PageSpeed Insights and Lighthouse provide actionable insights.

Focus on:

- Image compression

- Code minification

- Lazy loading

- CDN implementation

Reducing Server Response Time and Optimizing Hosting Performance

Choose high-performance hosting. Reduce Time to First Byte (TTFB).

Eliminating Redirect Chains and Minimizing HTTP Requests

The Hidden SEO Cost of Long Redirect Chains

Redirect chains slow down crawlers and users.
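
To see how a chain can be collapsed, the sketch below follows each redirect to its final destination and rewrites every rule to point there directly. The redirect map is a made-up example; in practice it would come from your server config or a crawl export.

```python
def resolve_chain(redirects: dict[str, str], start: str, max_hops: int = 10) -> list[str]:
    """Follow redirects from `start` and return every URL visited, in order."""
    chain = [start]
    while chain[-1] in redirects:
        nxt = redirects[chain[-1]]
        if nxt in chain:
            raise ValueError(f"redirect loop at {nxt}")
        chain.append(nxt)
        if len(chain) - 1 > max_hops:
            raise ValueError(f"more than {max_hops} hops from {start}")
    return chain


def flatten_redirects(redirects: dict[str, str]) -> dict[str, str]:
    """Point every source URL straight at its final destination."""
    return {src: resolve_chain(redirects, src)[-1] for src in redirects}


# Hypothetical redirect rules that have piled up over several redesigns.
rules = {"/old": "/interim", "/interim": "/new-2023", "/new-2023": "/new"}

print(resolve_chain(rules, "/old"))  # ['/old', '/interim', '/new-2023', '/new']
print(flatten_redirects(rules))
```

After flattening, every old URL reaches `/new` in a single hop.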

Optimizing JavaScript, CSS, and Image Compression

Reduce file sizes and defer non-essential scripts.

Implementing HTTPS Protocol and Security Best Practices to Build Trust and Protect User Data

HTTPS encryption is a confirmed ranking signal. It prevents data interception and improves credibility.

Best practices:

- Redirect HTTP to HTTPS with 301 status codes

- Fix mixed content warnings

- Renew SSL/TLS certificates before they expire

Secure sites earn more user trust, which supports both rankings and conversions.
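
Mixed content is easy to miss by eye. As a minimal sketch, the scanner below uses Python's built-in HTML parser to list images, scripts, iframes, and stylesheets that are still requested over plain HTTP. The class and function names are illustrative, and a full audit would also cover things like CSS `url()` references and fonts.

```python
from html.parser import HTMLParser


class MixedContentFinder(HTMLParser):
    """Collect subresource URLs that are loaded over plain HTTP."""

    def __init__(self):
        super().__init__()
        self.insecure = []

    def handle_starttag(self, tag, attrs):
        attr = dict(attrs)
        if tag in ("img", "script", "iframe"):
            url = attr.get("src")
        elif tag == "link" and "stylesheet" in (attr.get("rel") or "").lower():
            url = attr.get("href")
        else:
            url = None  # ordinary <a href> links are not mixed content
        if url and url.lower().startswith("http://"):
            self.insecure.append(url)


def find_mixed_content(html: str) -> list[str]:
    finder = MixedContentFinder()
    finder.feed(html)
    return finder.insecure


# Hypothetical page fragment served over HTTPS.
page = (
    '<link rel="stylesheet" href="http://cdn.example.com/site.css">'
    '<img src="https://example.com/logo.png">'
    '<script src="http://example.com/app.js"></script>'
    '<a href="http://example.com/about">About</a>'
)
print(find_mixed_content(page))
```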

XML Sitemaps and Robots.txt Configuration: Directing Search Engine Crawlers Effectively

Creating Optimized XML Sitemaps That Improve Discoverability

Submit sitemaps via Google Search Console.

Including Canonical URLs in XML Sitemaps

Avoid listing redirected or duplicate pages.

Updating Sitemaps for Dynamic and Large Websites

Automate sitemap updates.
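
Automation can be as simple as regenerating the file whenever content is published. The sketch below builds a sitemap in the sitemaps.org format with Python's standard library; the page list is invented, and in a real setup it would come from your CMS or database.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(pages: list[tuple[str, str]]) -> str:
    """Build sitemap XML from (canonical URL, last-modified YYYY-MM-DD) pairs."""
    # Setting xmlns as a plain attribute is enough for serialization here.
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for loc, lastmod in pages:
        url = ET.SubElement(urlset, "url")
        ET.SubElement(url, "loc").text = loc
        ET.SubElement(url, "lastmod").text = lastmod
    raw = ET.tostring(urlset, encoding="utf-8", xml_declaration=True)
    return raw.decode("utf-8")


# Hypothetical list of indexable, canonical pages.
sitemap_xml = build_sitemap([
    ("https://example.com/", "2024-03-10"),
    ("https://example.com/shoes/", "2024-03-08"),
])
print(sitemap_xml)
```

Only pass canonical, indexable URLs into a function like this, so redirected or duplicate pages never reach the sitemap.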

Structuring Robots.txt to Prevent Accidental Deindexation

Careless robots.txt rules can destroy rankings overnight.

Blocking Low-Value Pages Without Harming Important Content

Exclude admin pages, not core content.

Testing Robots.txt Directives for Errors

Always validate before deployment.
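
A pre-deployment check can be scripted. The sketch below uses Python's built-in `urllib.robotparser` to confirm that key URLs stay crawlable and private ones stay blocked. Note the assumption: this parser applies rules in file order and does not understand wildcards, so it only approximates Googlebot's matching. Treat it as a smoke test, not a replacement for Search Console. The rules and URLs are examples.

```python
from urllib.robotparser import RobotFileParser


def check_rules(robots_txt: str, must_allow: list[str], must_block: list[str],
                agent: str = "Googlebot") -> list[str]:
    """Return a list of human-readable problems; an empty list means all checks passed."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    problems = []
    for url in must_allow:
        if not parser.can_fetch(agent, url):
            problems.append(f"blocked but should be crawlable: {url}")
    for url in must_block:
        if parser.can_fetch(agent, url):
            problems.append(f"crawlable but should be blocked: {url}")
    return problems


# Hypothetical draft rules: the /assets/ line would also block CSS and JS.
draft = """
User-agent: *
Disallow: /wp-admin/
Disallow: /assets/
"""

problems = check_rules(
    draft,
    must_allow=["https://example.com/products/widget", "https://example.com/assets/site.css"],
    must_block=["https://example.com/wp-admin/options.php"],
)
print(problems)
```

Here the check flags the stylesheet under `/assets/`, which is exactly the kind of blocked resource that harms rendering.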

Managing Meta Robots and Monitoring Indexation Status

Meta robots tags control indexing behavior.

Using Noindex, Nofollow, and Noarchive Strategically

Prevent thin pages from diluting authority.

Identifying Index Bloat and Resolving Deindexing Issues

Audit regularly to maintain clean indexation status.

Canonical Tags and Duplicate Content Control: Consolidating Ranking Signals Across Similar URLs

Duplicate content splits ranking power.

What Causes Duplicate Content in Modern Websites

- URL parameters

- Session IDs

- Category filters

- HTTP vs HTTPS variations

Proper Implementation of Canonical Tags Across Variations

Declare preferred versions consistently.
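
Consistency is easier to enforce with a quick automated check. This sketch reads a page's HTML, finds its `rel="canonical"` link, and reports when it is missing, duplicated, or pointing somewhere unexpected. The helper names and sample markup are assumptions for illustration.

```python
from html.parser import HTMLParser
from typing import Optional


class CanonicalFinder(HTMLParser):
    """Collect the href of every <link rel="canonical"> on a page."""

    def __init__(self):
        super().__init__()
        self.canonicals = []

    def handle_starttag(self, tag, attrs):
        attr = dict(attrs)
        if tag == "link" and (attr.get("rel") or "").lower() == "canonical":
            self.canonicals.append(attr.get("href"))


def canonical_issue(html: str, expected: str) -> Optional[str]:
    """Return a description of the problem, or None if the canonical is as expected."""
    finder = CanonicalFinder()
    finder.feed(html)
    if not finder.canonicals:
        return "missing canonical"
    if len(finder.canonicals) > 1:
        return "multiple canonicals"
    if finder.canonicals[0] != expected:
        return f"points to {finder.canonicals[0]}"
    return None


# Hypothetical page whose canonical still uses the HTTP version.
page = '<head><link rel="canonical" href="http://example.com/shoes/"></head>'
print(canonical_issue(page, "https://example.com/shoes/"))
```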

Handling URL Parameters and Session IDs

Normalize parameters via canonical tags.
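
To decide what the canonical tag should contain, you can derive a normalized URL from any parameterized variant. The sketch below strips session and tracking parameters, lowercases the host, and forces HTTPS. The list of ignored parameters is an assumption; adjust it to the parameters your site actually uses.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Assumed list of parameters that never change page content.
IGNORED_PARAMS = {"sessionid", "sid", "utm_source", "utm_medium", "utm_campaign"}


def canonical_url(url: str) -> str:
    """Return the URL a canonical tag could point to for this variant."""
    parts = urlsplit(url)
    kept = sorted(
        (key, value)
        for key, value in parse_qsl(parts.query, keep_blank_values=True)
        if key.lower() not in IGNORED_PARAMS
    )
    # Force HTTPS, lowercase the host, sort remaining parameters, drop fragments.
    return urlunsplit(("https", parts.netloc.lower(), parts.path or "/", urlencode(kept), ""))


print(canonical_url("http://Example.com/shoes?utm_source=news&color=red&sid=abc123"))
# https://example.com/shoes?color=red
```

Parameters that do change content, such as `color` here, are kept so genuinely different pages are not merged.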

Cross-Domain Canonicalization for Syndicated Content

Protect original content ownership.

Mobile Responsiveness and Page Rendering Optimization for Mobile-First Indexing

Mobile responsiveness is mandatory.

Designing Fully Responsive Layouts That Adapt to All Screen Sizes

Use flexible grids and scalable images.

Ensuring Consistent Page Rendering Across Devices

Blocked CSS or JavaScript harms rendering.

Avoiding Hidden Content Issues on Mobile

Ensure mobile content matches desktop.

Testing Mobile Performance Using Search Console Tools

Regular testing prevents ranking losses.

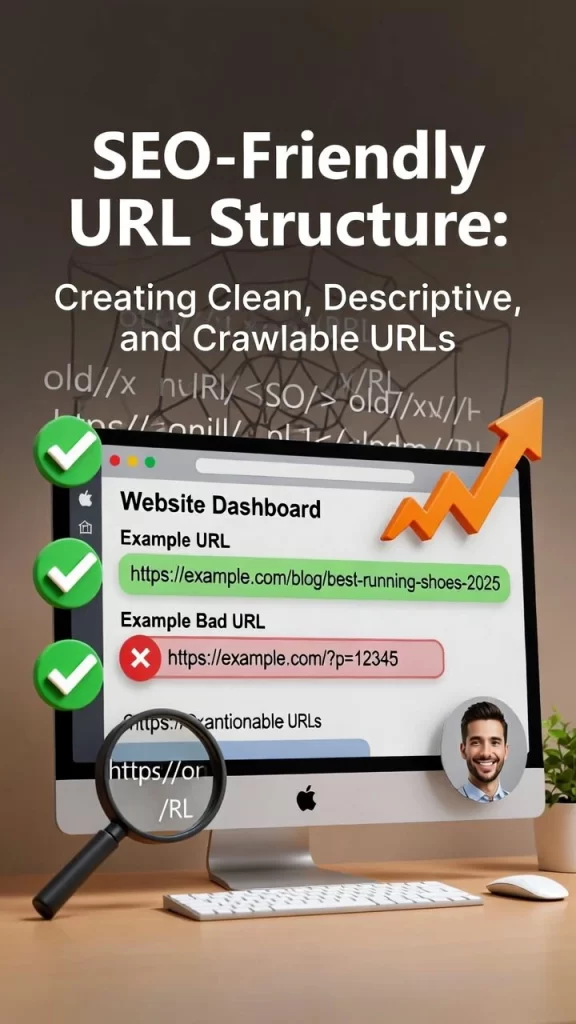

SEO-Friendly URL Structure: Creating Clean, Descriptive, and Crawlable URLs

Clear URLs improve both user trust and crawl efficiency.

Best practices:

- Keep URLs short and descriptive

- Separate words with hyphens

- Avoid unnecessary parameters

- Maintain a logical hierarchy
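
The practices above can be baked into how slugs are generated. Here is a minimal sketch that turns a page title into a short, lowercase, hyphen-separated slug. The six-word limit is an arbitrary assumption, not a rule from any search engine.

```python
import re
import unicodedata


def slugify(title: str, max_words: int = 6) -> str:
    """Turn a page title into a short, hyphen-separated URL slug."""
    # Strip accents so "Café" becomes "Cafe".
    ascii_text = unicodedata.normalize("NFKD", title).encode("ascii", "ignore").decode("ascii")
    # Keep only runs of letters and digits; everything else acts as a separator.
    words = re.findall(r"[a-z0-9]+", ascii_text.lower())
    return "-".join(words[:max_words])


print(slugify("10 Best Trail-Running Shoes (2024 Guide)!"))
# 10-best-trail-running-shoes-2024
```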

Structured Data and Schema Markup: Enhancing SERP Visibility with Rich Results

Structured data helps search engines understand context. Use schema markup to qualify for rich results.

Understanding Structured Data and Its Role in Semantic Search

Schema clarifies entities, products, articles, and FAQs.

Implementing Schema Markup Using JSON-LD Format

JSON-LD is Google’s recommended format.
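
For a sense of what JSON-LD looks like in practice, the sketch below generates an Article block ready to place in a page's `<head>`. The function name and sample values are hypothetical, and only a handful of schema.org Article properties are shown; check Google's documentation for the fields relevant to your content.

```python
import json


def article_jsonld(headline: str, author: str, date_published: str, url: str) -> str:
    """Return a <script> tag containing schema.org Article markup as JSON-LD."""
    data = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "author": {"@type": "Person", "name": author},
        "datePublished": date_published,  # ISO 8601 date
        "mainEntityOfPage": url,
    }
    # Escape "</" so text in a field cannot close the script tag early.
    payload = json.dumps(data, indent=2).replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{payload}\n</script>'


print(article_jsonld(
    "Core Elements of Technical SEO",
    "Jane Doe",
    "2024-03-10",
    "https://example.com/blog/technical-seo/",
))
```

Generating the markup from the same data that renders the page helps keep the structured data and the visible content in sync.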

Article Schema for Content Pages

FAQ Schema for Enhanced SERP

Review and Product Schema for E-Commerce Optimization

Advanced International SEO with Hreflang Tags and Regional Targeting

Hreflang tags prevent international duplicate content issues. Correct implementation ensures proper regional targeting. Common errors include missing return tags (page A references page B, but B does not reference A) and invalid or mismatched language codes.
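
Return tags are straightforward to verify once you have each page's hreflang annotations in one place. The sketch below assumes a dictionary of page URL to its declared alternates (hypothetical data) and lists every pair where the link back is missing.

```python
def missing_return_tags(hreflang: dict[str, dict[str, str]]) -> list[tuple[str, str]]:
    """hreflang maps page URL -> {language code: alternate URL}.

    Returns (source, target) pairs where the target does not reference the source.
    """
    missing = []
    for source, alternates in hreflang.items():
        for target in alternates.values():
            if target == source:
                continue  # self-reference, nothing to reciprocate
            if source not in hreflang.get(target, {}).values():
                missing.append((source, target))
    return missing


# Hypothetical annotations: the German page forgets to point back to English.
pages = {
    "https://example.com/en/": {
        "en": "https://example.com/en/",
        "de": "https://example.com/de/",
    },
    "https://example.com/de/": {
        "de": "https://example.com/de/",
    },
}

print(missing_return_tags(pages))
# [('https://example.com/en/', 'https://example.com/de/')]
```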

Log File Analysis and Server Status Codes: Diagnosing Crawl Behavior and Technical Errors

Log file analysis reveals how search engines interact with your site.

Using Log File Analysis to Understand Googlebot Activity

Identify crawl frequency and ignored URLs.
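
As a starting point, the sketch below counts Googlebot requests per URL and per status code from access log lines, then lists known URLs that were never requested. It assumes the common "combined" log format and matches on the user-agent string only, which can be spoofed; verifying real Googlebot traffic requires a reverse DNS check. The log lines and URL list are invented.

```python
import re
from collections import Counter

# Matches the request, status, and trailing user-agent of a combined-format log line.
LINE = re.compile(
    r'"(?:GET|HEAD|POST) (?P<path>\S+) [^"]*" (?P<status>\d{3}) .*"(?P<agent>[^"]*)"$'
)


def googlebot_hits(lines):
    """Count Googlebot requests per path and per status code."""
    paths, statuses = Counter(), Counter()
    for line in lines:
        match = LINE.search(line.strip())
        if match and "Googlebot" in match.group("agent"):
            paths[match.group("path")] += 1
            statuses[match.group("status")] += 1
    return paths, statuses


# Hypothetical log excerpt.
GOOGLEBOT = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"
log_lines = [
    f'66.249.66.1 - - [10/Mar/2024:06:25:14 +0000] "GET /shoes/ HTTP/1.1" 200 5123 "-" "{GOOGLEBOT}"',
    f'66.249.66.1 - - [10/Mar/2024:06:25:20 +0000] "GET /old-page HTTP/1.1" 404 312 "-" "{GOOGLEBOT}"',
    '203.0.113.7 - - [10/Mar/2024:06:26:01 +0000] "GET /pricing/ HTTP/1.1" 200 4410 "-" "Mozilla/5.0 (X11; Linux x86_64)"',
]

paths, statuses = googlebot_hits(log_lines)
known_urls = {"/shoes/", "/pricing/", "/blog/"}
never_crawled = sorted(known_urls - set(paths))
print(paths, statuses, never_crawled)
```

In this sample, `/pricing/` and `/blog/` were never requested by Googlebot, and the 404 on `/old-page` shows crawl activity spent on a dead URL.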

Monitoring HTTP Status Codes for SEO Health

- 200 – Success

- 301 – Permanent redirect

- 404 – Not found

- 500 – Server error

Fix errors immediately to protect crawl efficiency.

Monitoring and Improving Indexation Status for Maximum Search Visibility

Regularly audit indexed pages.

Fix:

- Pages reported as "Crawled - currently not indexed"

- Soft 404s

- Pages reported as "Duplicate without user-selected canonical"

Prevent index bloat by cleaning low-value pages.

Common Technical SEO Mistakes That Undermine Crawlability, Rankings, and Site Performance

Avoid:

- Blocking CSS or JS in robots.txt

- Ignoring mobile responsiveness

- Allowing redirect chains

- Neglecting HTTPS

- Forgetting to update XML sitemaps

Frequently Asked Questions About Core Elements of Technical SEO

1. What are the most important core elements of technical SEO?

Site speed, crawl budget, structured data, canonical tags, HTTPS, mobile responsiveness, and site architecture.

2. How does crawl budget affect large websites?

If wasted, important pages may not get indexed quickly.

3. Is structured data required for ranking?

Not mandatory, but it increases visibility via rich results.

4. How often should technical SEO audits be conducted?

Quarterly, or after major site updates.

5. Do canonical tags solve all duplicate content issues?

No, but they significantly reduce ranking dilution.

6. What tools help analyze log files and status codes?

Screaming Frog (including its Log File Analyser), Ahrefs, and Semrush are widely used options.

Mastering the Core Elements of Technical SEO for Sustainable Organic Dominance

The core elements of technical SEO are not optional enhancements; they are mission-critical foundations. When site speed, crawl efficiency, structured data, canonical tags, and mobile responsiveness work together seamlessly, search engines reward your site with stronger visibility and higher rankings.

Technical excellence builds trust. Trust builds authority. Authority builds traffic.